RASKRASER

Creating a texture from multiple images and camera position and rotation data

Project objective:

Create a texture from multiple images and data on the camera's position and rotation.

What we did and how we did it:

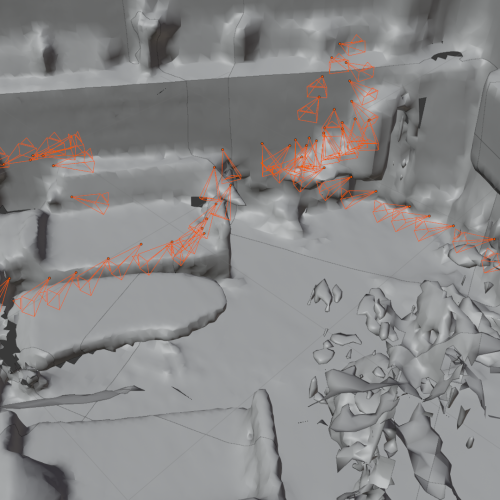

It can be described as reverse rendering. A bounding volume hierarchy (BVH) is built over all triangular polygons of the scene. From each camera position, rays are cast to determine which polygons of the model are visible. For each visible polygon, barycentric coordinates are used to match pixels in the photograph (the image on the picture plane) with the corresponding pixels on the UV map. The color from the photograph is then transferred to the texture laid out on the UV map.
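The per-pixel step above can be sketched in Rust, the language of the final implementation. This is a minimal sketch under stated assumptions, not the project's code: ray origins and directions are assumed to be already generated from the camera's position and rotation, the Möller–Trumbore test is used as one common way to obtain barycentric coordinates, and a linear scan over triangles stands in for the BVH to keep the example short. The names `hit_uv` and `transfer` are hypothetical.

```rust
// Sketch only: a linear scan over triangles replaces the BVH (assumption),
// and rays are assumed to be precomputed from the camera pose.

#[derive(Clone, Copy, Debug, PartialEq)]
struct Vec3 { x: f64, y: f64, z: f64 }

impl Vec3 {
    fn new(x: f64, y: f64, z: f64) -> Self { Vec3 { x, y, z } }
    fn sub(self, o: Vec3) -> Vec3 { Vec3::new(self.x - o.x, self.y - o.y, self.z - o.z) }
    fn dot(self, o: Vec3) -> f64 { self.x * o.x + self.y * o.y + self.z * o.z }
    fn cross(self, o: Vec3) -> Vec3 {
        Vec3::new(self.y * o.z - self.z * o.y,
                  self.z * o.x - self.x * o.z,
                  self.x * o.y - self.y * o.x)
    }
}

/// A mesh triangle: three vertex positions and their UV coordinates.
struct Triangle { p: [Vec3; 3], uv: [(f64, f64); 3] }

/// Möller–Trumbore ray/triangle test. Returns (t, u, v), where
/// (1 - u - v, u, v) are the barycentric weights of p[0], p[1], p[2].
fn intersect(orig: Vec3, dir: Vec3, tri: &Triangle) -> Option<(f64, f64, f64)> {
    const EPS: f64 = 1e-9;
    let e1 = tri.p[1].sub(tri.p[0]);
    let e2 = tri.p[2].sub(tri.p[0]);
    let pvec = dir.cross(e2);
    let det = e1.dot(pvec);
    if det.abs() < EPS { return None; } // ray is parallel to the triangle plane
    let inv_det = 1.0 / det;
    let tvec = orig.sub(tri.p[0]);
    let u = tvec.dot(pvec) * inv_det;
    if u < 0.0 || u > 1.0 { return None; }
    let qvec = tvec.cross(e1);
    let v = dir.dot(qvec) * inv_det;
    if v < 0.0 || u + v > 1.0 { return None; }
    let t = e2.dot(qvec) * inv_det;
    if t > EPS { Some((t, u, v)) } else { None } // hit must lie in front of the camera
}

/// Finds the nearest hit along the ray (the visible triangle) and returns
/// the UV coordinate interpolated from that triangle's vertex UVs.
fn hit_uv(orig: Vec3, dir: Vec3, mesh: &[Triangle]) -> Option<(f64, f64)> {
    let mut best: Option<(f64, (f64, f64))> = None;
    for tri in mesh {
        if let Some((t, u, v)) = intersect(orig, dir, tri) {
            if best.map_or(true, |(bt, _)| t < bt) {
                let w = 1.0 - u - v;
                let uv = (
                    w * tri.uv[0].0 + u * tri.uv[1].0 + v * tri.uv[2].0,
                    w * tri.uv[0].1 + u * tri.uv[1].1 + v * tri.uv[2].1,
                );
                best = Some((t, uv));
            }
        }
    }
    best.map(|(_, uv)| uv)
}

/// Writes one photo pixel color into the texel under `uv`.
/// Assumes UV (0, 0) is the bottom-left corner of a row-major texture.
fn transfer(color: [u8; 3], uv: (f64, f64), tex: &mut [[u8; 3]], w: usize, h: usize) {
    let x = ((uv.0 * w as f64) as usize).min(w - 1);
    let y = (((1.0 - uv.1) * h as f64) as usize).min(h - 1);
    tex[y * w + x] = color;
}

fn main() {
    // One triangle in the z = 0 plane whose UVs cover the lower-left half.
    let mesh = vec![Triangle {
        p: [Vec3::new(0.0, 0.0, 0.0), Vec3::new(1.0, 0.0, 0.0), Vec3::new(0.0, 1.0, 0.0)],
        uv: [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)],
    }];
    // A ray from a camera above the mesh, looking straight down (-z).
    let orig = Vec3::new(0.25, 0.25, 5.0);
    let dir = Vec3::new(0.0, 0.0, -1.0);
    let (w, h) = (4, 4);
    let mut tex = vec![[0u8; 3]; w * h];
    if let Some(uv) = hit_uv(orig, dir, &mesh) {
        transfer([255, 0, 0], uv, &mut tex, w, h);
        println!("uv = {:?}", uv);
    }
}
```

Replacing the linear scan in `hit_uv` with a BVH traversal would change only which triangles get tested; the barycentric interpolation and the color transfer stay the same.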

Specifics of the project:

It was initially implemented with the Blender Python API and later rewritten entirely in Rust as a standalone application.

Year of implementation: 2020

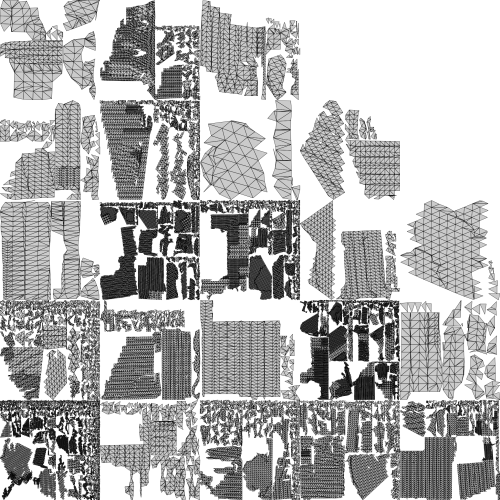

70 images are projected onto a single UV map: